Every enterprise AI project eventually hits the same problem: the data that makes an LLM genuinely useful (real customer records, support transcripts, transaction histories, health notes) is exactly the data you are not supposed to feed a model.

This is not because AI is inherently insecure. It is because most enterprise AI pipelines were not designed with a clear answer to a simple question: at what point does sensitive customer data stop being protected and start being model input?

The gap between those two states, between data sitting safely in a vault and data flowing through a prompt builder, a vector store, or a fine-tuning job, is where PII leaks happen. And they happen in ways that are much harder to audit or reverse than a database breach, because the data may now be encoded in model weights.

This post walks through where PII exposure happens in AI pipelines, why standard encryption isn’t enough, and how a vault-first tokenization approach keeps LLMs useful without handing them the keys to your customer data.

Why AI Pipelines Are a New Category of PII Risk

Traditional data security thinking focuses on storage and transit: encrypt the database, secure the API, audit the logs. AI pipelines introduce a third exposure surface that most security frameworks have not caught up with: the model itself.

When a language model is fine-tuned on data containing real names, Aadhaar numbers, phone numbers, or financial details, that information is not simply processed and discarded. It is encoded, imperfectly and persistently, into the model’s weights. Research has consistently demonstrated that sufficiently capable models can reproduce training data verbatim under adversarial prompting, a property known as memorisation.

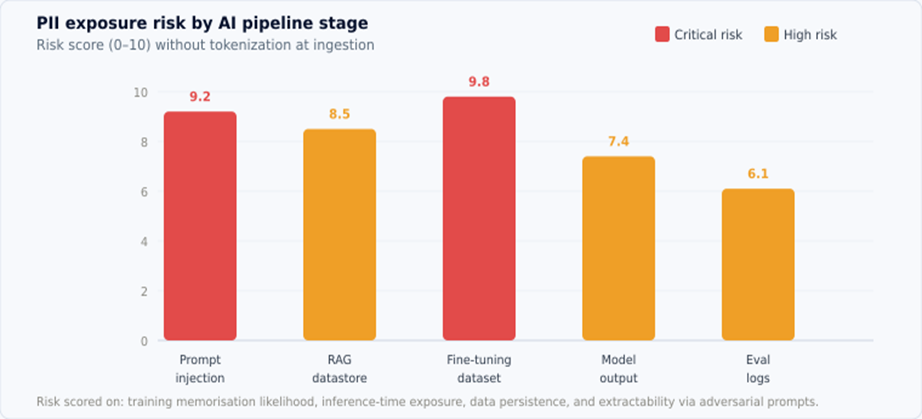

Fig 1: PII exposure risk score by AI pipeline stage (0-10, without tokenization at ingestion). Fine-tuning datasets and prompt injection carry the highest risk.

Even without fine-tuning, retrieval-augmented generation (RAG) systems regularly inject raw PII into prompts at inference time. The model does not store it permanently, but the prompt and the model’s output may contain sensitive customer data that is logged, cached, or sent to third-party services as part of the inference stack.

The risk is not theoretical. Several public incidents have involved LLMs reproducing customer data from training sets in response to carefully crafted prompts. For Indian enterprises handling Aadhaar-linked identifiers, UPI transaction history, and health records, the regulatory and reputational consequences of this class of exposure are severe.

| THE CORE PROBLEM: Encryption protects data at rest and in transit. It does not protect data that has been ingested into a model. Once PII enters a fine-tuning dataset or a RAG datastore as plaintext, the protection boundary has already been crossed. |

The Five Stages Where PII Enters AI Pipelines

Understanding where the exposure happens is the first step to preventing it. In a typical enterprise AI deployment, there are five distinct points where PII can enter the pipeline:

1. Prompt construction

A service retrieves customer records to build context for a support or analysis prompt. Raw customer fields (name, phone, account number) are injected directly into the prompt string.

2. RAG datastore ingestion

Documents or records containing PII are embedded and stored in a vector database. Every retrieval operation pulls chunks that may contain real customer identifiers alongside the semantic content.

3. Fine-tuning datasets

Customer interaction data (support transcripts, chat logs, email threads) is used to fine-tune a model for domain-specific behaviour. PII in the training data can be memorised by the model.

4. Model outputs and responses

The model reproduces customer data from context or memory in its response. This output is then logged, stored, or forwarded through downstream services that may not have appropriate access controls.

5. Evaluation and logging

Prompt-response pairs are logged for model evaluation, quality review, or debugging. These logs often contain PII from prompts and responses and are stored with weaker controls than production databases.

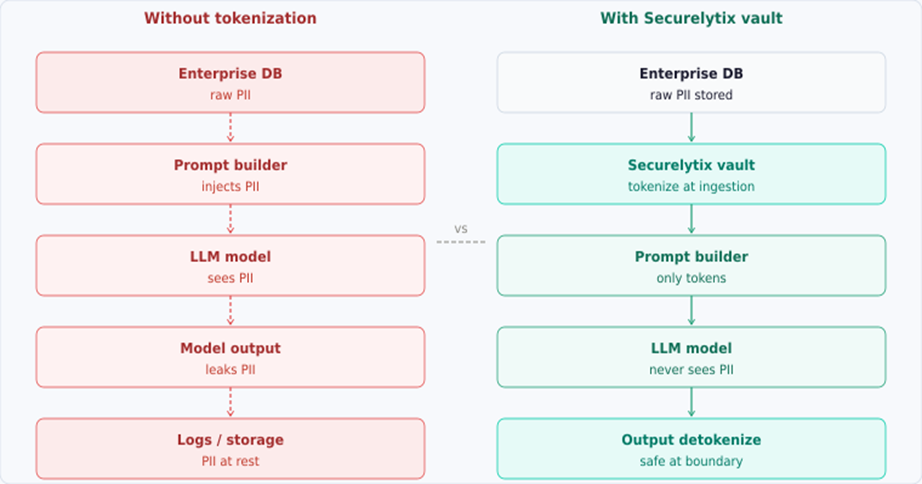

Fig 2: AI pipeline without tokenization (left) vs. with Securelytix vault (right). Tokenization at ingestion means PII never reaches the model.

Why Encryption Alone Does Not Solve This

The instinctive response to data security concerns is encryption, and encryption is genuinely important. But it solves a different problem.

Encryption protects data at rest: it ensures that the raw bytes sitting in a database or object store are unreadable without the key. The moment you decrypt that data to build a prompt, it is in plaintext in memory, and from that point forward, the standard encryption story has nothing more to say.

In an AI pipeline, decryption happens very early, at the retrieval step, before data enters the prompt builder. Everything downstream of that point (the prompt string, the model input, the model output, the evaluation log) is unprotected by encryption.

| THE ENCRYPTION GAP: Encrypting your customer database does not prevent your LLM from memorising a customer’s Aadhaar number. The model ingests the plaintext value after decryption, and from that moment the encryption layer is irrelevant to what the model knows. |

What is needed is a mechanism that keeps the plaintext value out of the pipeline entirely, replacing it with something the model can reason about structurally, without the model ever seeing the real value. That is what tokenization does.

Tokenization at Ingestion: The Right Protection Boundary

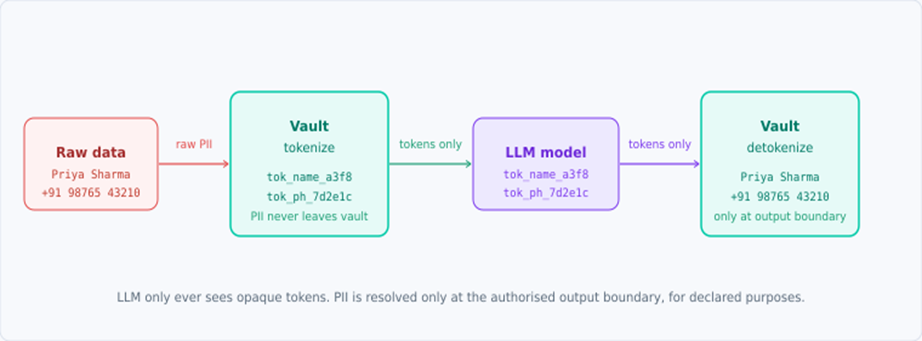

The right place to establish the protection boundary is at the point of ingestion, before data enters any AI pipeline component. Tokenization replaces sensitive field values with opaque, meaningless tokens at this boundary. The token preserves the structural position of the data (it occupies the same field slot, the same position in the document) but carries no recoverable information about the original value.

A tokenized training record looks like this:

Original customer support transcript:

“Customer Priya Sharma, Aadhaar 4567 8901 2345, called on 14 March to report a failed UPI transaction on account ending 4892.”

After Securelytix tokenization at the ingestion boundary:

“Customer tok_name_a3f8c9, tok_id_7d2e1c4b, called on 14 March to report a failed UPI transaction on account ending tok_acc_3f9a2b.”

The model learns the structure, the business logic, and the conversational patterns from this record. That is all you actually need it to learn. It does not learn the customer’s real name, their Aadhaar number, or their account identifier, because those values were never in the training data.
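To make the ingestion step concrete, here is a minimal sketch in Python. It assumes the sensitive values are already known from structured fields on the record; detecting PII in free text is a separate problem. `InMemoryVault` and `tokenize_transcript` are hypothetical names for illustration, not the Securelytix API, and a real vault would persist its mapping behind access controls.

```python
import re
import secrets


class InMemoryVault:
    """Toy stand-in for a token vault: keeps the value/token mapping in memory."""

    def __init__(self):
        self._token_by_value = {}
        self._value_by_token = {}

    def tokenize(self, kind, value):
        """Return the token for (kind, value), minting one the first time it is seen."""
        key = (kind, value)
        if key not in self._token_by_value:
            token = f"tok_{kind}_{secrets.token_hex(4)}"
            self._token_by_value[key] = token
            self._value_by_token[token] = value
        return self._token_by_value[key]


def tokenize_transcript(text, sensitive_fields, vault):
    """Replace each known sensitive value in `text` with its vault token.

    `sensitive_fields` maps a field kind (e.g. "name") to its plaintext value.
    A single regex pass is used so freshly inserted tokens are never rescanned,
    and longer values are tried first so a short value cannot split a longer one.
    """
    if not sensitive_fields:
        return text
    kind_by_value = {value: kind for kind, value in sensitive_fields.items()}
    alternatives = sorted(kind_by_value, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(value) for value in alternatives))
    return pattern.sub(
        lambda m: vault.tokenize(kind_by_value[m.group(0)], m.group(0)), text
    )


vault = InMemoryVault()
raw = ("Customer Priya Sharma, Aadhaar 4567 8901 2345, called on 14 March "
       "to report a failed UPI transaction on account ending 4892.")
fields = {"name": "Priya Sharma", "id": "4567 8901 2345", "acc": "4892"}

safe = tokenize_transcript(raw, fields, vault)
print(safe)  # same sentence, with three tok_... placeholders instead of PII
```

Only `safe` would be written to the fine-tuning dataset or vector store; `raw` never leaves the ingestion boundary.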

Fig 3: Tokenization flow through an LLM pipeline. The vault tokenizes at ingestion and detokenizes only at the authorised output boundary.

For RAG and inference-time pipelines, the same principle applies: tokens are stored in the vector datastore, tokens are retrieved into prompts, and the model reasons over tokens. Detokenization happens at the output boundary, for authorised callers, for declared purposes, exactly as in the zero-trust data access pattern.
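The inference-time flow described above can be sketched end to end. This is an illustration under stated assumptions: `fake_llm` stands in for a real model call, `vault_mapping` stands in for a vault lookup, and the token pattern simply follows the `tok_<kind>_<hex>` shape used in this post.

```python
import re

# Matches tokens shaped like the examples in this post, e.g. tok_name_a3f8c9.
TOKEN_PATTERN = re.compile(r"tok_[a-z]+_[0-9a-f]+")


def detokenize_text(text, resolve):
    """Swap every token in `text` for the value returned by `resolve`.

    `resolve(token)` returns the plaintext, or None when the caller is not
    authorised. Unresolved tokens are left in place.
    """
    def swap(match):
        value = resolve(match.group(0))
        return match.group(0) if value is None else value

    return TOKEN_PATTERN.sub(swap, text)


def fake_llm(prompt):
    """Stand-in for a model call: echoes back the customer token it was given."""
    customer = TOKEN_PATTERN.search(prompt).group(0)
    return f"Summary: {customer} reported a failed UPI transaction."


# Hypothetical vault contents; in practice this lookup is a vault API call.
vault_mapping = {"tok_name_a3f8c9": "Priya Sharma"}

retrieved_chunk = ("Customer tok_name_a3f8c9 called on 14 March "
                   "to report a failed UPI transaction.")
prompt = f"Summarise this support call:\n{retrieved_chunk}"

model_output = fake_llm(prompt)  # the model only ever sees tokens
agent_view = detokenize_text(model_output, vault_mapping.get)
analytics_view = detokenize_text(model_output, lambda token: None)

print(model_output)    # Summary: tok_name_a3f8c9 reported a failed UPI transaction.
print(agent_view)      # Summary: Priya Sharma reported a failed UPI transaction.
print(analytics_view)  # Summary: tok_name_a3f8c9 reported a failed UPI transaction.
```

The prompt, the model output, and anything logged along the way contain only tokens. Real values appear only in the view produced for an authorised caller.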

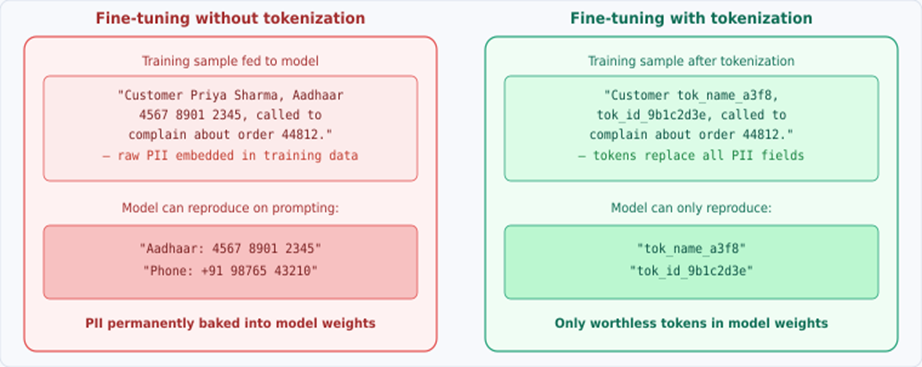

What the Model Actually Memorises

The difference in what a model encodes during fine-tuning, with and without tokenization, is not subtle.

Fig 4: What gets baked into model weights: real PII vs opaque tokens. Only the tokenized approach keeps customer data out of the model permanently.

With tokenization, the worst outcome from a compromised or adversarially probed model is the reproduction of a token like tok_name_a3f8c9. That token is meaningless without access to the vault’s token-to-value mapping, which is protected by the same mutual TLS and scoped-access controls described in our zero-trust architecture post.

Without tokenization, the worst outcome is the verbatim reproduction of a customer’s Aadhaar number, bank account details, or health record in response to a carefully crafted prompt. That is not a theoretical risk. It has happened in production systems.

Deterministic Tokens: Keeping the Model Coherent

A common concern with tokenization for AI is coherence: if the same customer appears multiple times in training data or in a RAG retrieval, will the model understand that tok_name_a3f8c9 in one document refers to the same entity as tok_name_a3f8c9 in another?

Yes, provided the tokenization is deterministic. Deterministic tokenization means the same input value always maps to the same output token. Priya Sharma always becomes tok_name_a3f8c9, across every document, every pipeline run, every retrieval. The model can track entity identity, learn relationship patterns, and reason about customer behaviour, without ever knowing the customer’s real name.

This is a critical distinction from random tokenization, where the same value maps to a different token on each call. Random tokenization would break the model’s ability to reason about the same entity across documents. Deterministic tokenization preserves that capability while eliminating PII exposure.

| DETERMINISTIC VS RANDOM TOKENIZATION: Use deterministic tokenization for AI pipelines. The same input always produces the same token, so the model can track entities consistently across documents. Random tokenization destroys entity coherence and makes the model useless for customer-level reasoning. |
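One common way to get deterministic tokens is a keyed hash. The sketch below uses HMAC-SHA256 from the Python standard library. It is an illustration, not a description of how Securelytix derives tokens: the key, the normalisation rule, and the 16-character truncation are all assumptions. A keyed hash is also one-way, so a vault would still need to store the token-to-value mapping to support detokenization.

```python
import hashlib
import hmac
import secrets


def deterministic_token(kind, value, key):
    """Derive a stable token from a value using a keyed hash (HMAC-SHA256)."""
    # Normalise so trivial formatting differences map to the same token.
    normalised = " ".join(value.split()).casefold()
    message = f"{kind}:{normalised}".encode("utf-8")
    digest = hmac.new(key, message, hashlib.sha256).hexdigest()
    return f"tok_{kind}_{digest[:16]}"


def random_token(kind):
    """Mint a fresh token on every call, with no link to the input value."""
    return f"tok_{kind}_{secrets.token_hex(8)}"


# Hypothetical key for the demo; a real key would live in a KMS or HSM.
key = b"demo-key-held-only-by-the-vault"

a = deterministic_token("name", "Priya Sharma", key)
b = deterministic_token("name", "  priya   sharma ", key)
c = deterministic_token("name", "Rahul Verma", key)

print(a == b)  # True: same customer, same token in every document
print(a == c)  # False: different customers get different tokens
print(random_token("name") == random_token("name"))  # False in practice: a new token each call
```

Without the key, a token cannot be recomputed from a guessed value, which is why a plain unkeyed hash of an Aadhaar or phone number would be a poor substitute: the input space is small enough to enumerate.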

Output Boundary: Detokenization for Authorised Use Cases

Tokenization at ingestion solves the model memorisation problem. But enterprise AI systems also need to produce outputs that contain real customer information: a support agent needs to see the customer’s actual name, a notification system needs to send to the real phone number.

This is where the output boundary detokenization pattern matters. The model produces an output containing tokens. That output is passed to the vault, which resolves tokens to real values, but only for:

• Callers with a valid mTLS certificate provisioned for detokenization access

• Declared purposes that match the vault’s access policy for that data type

• Specific token values that the caller is authorised to resolve

• Requests made under a time-limited, scoped access token, not an open-ended credential

The result is that the same AI system can power a support agent (who is authorised to see the customer’s name and phone number), an analytics dashboard (which sees only aggregate, non-identified patterns), and a compliance report (which sees only what the compliance team is permitted to see) from the same underlying model, with the access control enforced at the output boundary, not inside the model.
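The checks listed above can be sketched as a single policy decision at the output boundary. Everything here is hypothetical: the `Grant` shape, the purpose strings, and the audit record fields are illustrative, and the caller is assumed to have been authenticated already (for example by mTLS) before this code runs.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Grant:
    """A time-limited, scoped permission to detokenize (hypothetical shape)."""
    caller: str
    purpose: str
    allowed_kinds: frozenset
    expires_at: float  # Unix timestamp


@dataclass
class Vault:
    values: dict  # token -> plaintext
    audit_log: list = field(default_factory=list)

    def detokenize(self, token, grant, purpose, now):
        """Resolve a token only if the grant is live and covers this purpose and kind."""
        parts = token.split("_")  # tokens look like tok_<kind>_<id>
        kind = parts[1] if len(parts) == 3 else None
        allowed = (
            now < grant.expires_at
            and purpose == grant.purpose
            and kind in grant.allowed_kinds
            and token in self.values
        )
        # Every attempt is recorded, whether it succeeds or not.
        self.audit_log.append({
            "caller": grant.caller,
            "purpose": purpose,
            "token": token,
            "outcome": "allowed" if allowed else "denied",
            "at": now,
        })
        return self.values[token] if allowed else None


vault = Vault(values={
    "tok_name_a3f8c9": "Priya Sharma",
    "tok_id_7d2e1c4b": "4567 8901 2345",
})
support = Grant("support-console", "support_case", frozenset({"name"}), expires_at=1000.0)
analytics = Grant("analytics-export", "aggregate_reporting", frozenset(), expires_at=1000.0)

print(vault.detokenize("tok_name_a3f8c9", support, "support_case", now=500.0))           # Priya Sharma
print(vault.detokenize("tok_id_7d2e1c4b", support, "support_case", now=500.0))           # None: kind out of scope
print(vault.detokenize("tok_name_a3f8c9", analytics, "aggregate_reporting", now=500.0))  # None: no kinds granted
print(vault.detokenize("tok_name_a3f8c9", support, "support_case", now=2000.0))          # None: grant expired
```

The model plays no part in this decision. The same tokenized output yields different views for different callers, and `audit_log` records each attempt with its outcome.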

Frequently Asked Questions

Q: What does ‘PII in AI pipelines’ mean, and why is it a problem?

A: PII in AI pipelines refers to sensitive customer data (names, Aadhaar numbers, phone numbers, and financial details) that flows into AI system components such as prompt builders, vector stores, fine-tuning datasets, and model outputs. The problem is that, unlike a database breach, exposure in an AI pipeline can be permanent: once a model is fine-tuned on data containing real PII, that information may be encoded in the model’s weights and reproducible under adversarial prompting. Standard encryption does not address this because the data is decrypted before it enters the model.

Q: Can an LLM actually remember and reproduce customer PII?

A: Yes. Research in model memorisation has consistently shown that language models can reproduce verbatim sequences from their training data, particularly for data that appears repeatedly or in distinctive formats. Structured identifiers like phone numbers, Aadhaar numbers, and account numbers are especially susceptible because they have a distinctive pattern that models can learn to reproduce. Several public incidents have demonstrated this in production systems. The risk scales with model size and training data repetition.

Q: How is tokenization for AI different from tokenization in databases?

A: The principle is the same: replace sensitive values with opaque tokens. But AI pipelines have an additional requirement: determinism. In a database context, you can use random tokenization (different token each call) because you only need to look up the value by token ID. In an AI pipeline, the model needs to track entity identity across multiple documents. Deterministic tokenization ensures the same customer always maps to the same token, so the model can reason about entity relationships without ever seeing real PII.

Q: Does tokenization break RAG retrieval quality?

A: No, if done correctly. Semantic search and embedding quality depend on the context words around a value, not the sensitive value itself. A support transcript about a payment dispute retains its semantic meaning whether the customer is named ‘Priya Sharma’ or ‘tok_name_a3f8c9’. The embedding model encodes the topic, sentiment, and business context, which is what you want to retrieve. In practice, tokenized RAG systems perform comparably to non-tokenized ones for semantic retrieval while eliminating PII exposure in the vector store.

Q: What happens if the vault goes down? Does the AI system stop working?

A: Tokenization at ingestion only requires vault availability when data enters the pipeline, not at every inference call. For RAG retrieval and prompt construction using pre-tokenized data, the vault is not in the critical inference path. Output detokenization does require vault availability, but this can be designed with caching and fallback patterns. Securelytix is engineered for high availability with sub-5ms P99 latency on token resolution, and supports batch resolution for high-throughput output pipelines.
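As a rough illustration of the caching and fallback idea, the sketch below resolves a batch of tokens in one call and, if the vault is unreachable, serves only recent cache entries. The `VaultUnavailable` exception, the `resolve_batch` callable, and the 60-second TTL are assumptions for the example, not part of any documented client.

```python
class VaultUnavailable(Exception):
    """Raised by the (hypothetical) vault client when the vault cannot be reached."""


def resolve_batch_with_fallback(tokens, resolve_batch, cache, now, ttl_seconds=60.0):
    """Resolve tokens in one batch call, falling back to fresh cache entries.

    Returns {token: value}. Tokens that cannot be resolved are omitted, so the
    caller can leave them masked rather than fail the whole response.
    """
    unique = list(dict.fromkeys(tokens))  # de-duplicate while keeping order
    try:
        resolved = resolve_batch(unique)  # one round trip for the whole batch
    except VaultUnavailable:
        return {
            token: cache[token][0]
            for token in unique
            if token in cache and now - cache[token][1] <= ttl_seconds
        }
    for token, value in resolved.items():
        cache[token] = (value, now)
    return resolved


def healthy_vault(tokens):
    known = {"tok_name_a3f8c9": "Priya Sharma"}
    return {t: known[t] for t in tokens if t in known}


def down_vault(tokens):
    raise VaultUnavailable("vault unreachable")


cache = {}
print(resolve_batch_with_fallback(["tok_name_a3f8c9"], healthy_vault, cache, now=0.0))
print(resolve_batch_with_fallback(["tok_name_a3f8c9"], down_vault, cache, now=30.0))   # served from cache
print(resolve_batch_with_fallback(["tok_name_a3f8c9"], down_vault, cache, now=120.0))  # {}: entry too old
```

A cache like this holds plaintext outside the vault and skips the per-request policy check while the vault is down, so it is a trade-off. Keeping the TTL short and scoping the cache to a single authorised caller limits the exposure.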

Q: Is this relevant for enterprises using third-party LLM APIs like OpenAI or Gemini?

A: Especially so. When you send a prompt to a third-party API, the data in that prompt is outside your control boundary. If that prompt contains raw customer PII, you have effectively sent sensitive customer data to a third party, with uncertain data retention, logging, and training policies. Tokenizing before the API call means the third party receives tokens, not real customer data. Even if the third party logs prompts indefinitely or uses them for model improvement, they have no customer data to leak.

How Securelytix Approaches This

Implementing safe AI pipelines for enterprise data requires the same underlying infrastructure as zero-trust data access: a vault that tokenizes at the right boundary, enforces access policy at the output, and logs everything. Securelytix was built for this architecture from the ground up.

A few things worth knowing about how it works in practice for AI pipelines:

| Capability | What it means for AI pipelines |
| --- | --- |
| Pre-ingestion tokenization | PII is replaced with tokens before it touches any AI pipeline component: prompt builder, vector store, or fine-tuning dataset. The model never trains on or processes real identifiers. |
| Purpose-scoped detokenization | Outputs are detokenized only at the authorised boundary, only for declared purposes. A support summary can be detokenized for the agent; the same data is blocked for analytics exports. |
| Consistent tokens across pipelines | Deterministic tokenization means the same customer appears as the same token across RAG retrieval, prompt context, and output, so the model can reason coherently without ever seeing the real value. |
| Audit log on every resolution | Every detokenization at the output boundary is logged with the calling system identity, declared purpose, and outcome. You have a full record of what the AI revealed to whom. |
| On-premise deployment | The vault runs inside your infrastructure. No customer data transits Securelytix systems, which matters especially for health records, financial data, and government-issued identifiers. |

The key architectural point is that Securelytix sits at the boundary between your data infrastructure and your AI infrastructure, not inside either. It does not require changes to your model training pipeline or your inference stack. You tokenize before data enters the AI layer, and you detokenize after output exits it. Everything in between is a safe zone.

Architecture documentation and integration guides are available at securelytix.tech/architecture.

Closing: The Boundary You Need to Define

Every enterprise AI project eventually confronts the same question: where does the protection boundary sit? The answer that most teams arrive at, encrypting the database and hoping the AI layer handles the rest, is not an answer. It is a gap.

The boundary needs to be defined explicitly, at the point of ingestion, before data touches any model component. Tokenization is the mechanism that enforces it. Not as a post-processing step, not as an output filter, but as a structural property of how data enters the AI system.

Models trained on tokenized data cannot reproduce customer PII, because they never saw it. RAG systems retrieving tokenized documents cannot expose customer identifiers in prompts, because the identifiers are not there. Output detokenization at a policy-controlled boundary means the right people get the right data, and the audit log proves it.

For Indian enterprises building AI systems on top of customer data (whether that is a support co-pilot, a fraud detection model, or a personalisation engine), the question is not whether to protect PII in the pipeline. It is where to put the boundary.
