The most dangerous assumption in enterprise software isn’t a bug in your code. It’s this: if a request came from inside the network, it can be trusted.

This assumption has persisted across decades of software architecture because it was convenient. It mostly worked until it catastrophically didn’t. Breaches at large enterprises almost always follow the same sequence: an attacker gains a foothold through a misconfigured microservice or a phished credential, then moves laterally through the system because inside the network, most doors are unlocked.

Microservices, Kubernetes, and multi-cloud deployments have dissolved the perimeter almost entirely. Your internal network now spans cloud regions, a CDN, an on-premise data centre, and a dozen vendor APIs. The perimeter is everywhere, which means it is effectively nowhere.

This post is about a different architecture – one where ambient access doesn’t exist, and every data operation is explicit, audited, and bounded in time.

The Problem with “Trust the Network”

In a traditional perimeter model, services inside the network can query databases freely. Breach one service and lateral movement is unimpeded – the attacker inherits all the access that service had, including tables it barely needed, because someone gave it broad database credentials years ago.

Fig: Average data breach cost in India (INR crore). Zero-trust organisations report 40–50% lower costs. Source: IBM 2024.

What Zero-Trust Data Access Actually Means

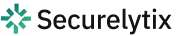

Zero-trust data access is a posture, not a product. The governing principle: access to sensitive data must be explicit, scoped, short-lived, and audited on every single operation.

No service gets ambient credentials. No service can read data it didn’t specifically request, for a specific business purpose, within a specific time window. The architecture enforces this through four interlocking components:

• Component 1: A vault boundary enforced with mutual TLS

• Component 2: Short-lived, scoped capability tokens

• Component 3: Just-in-time detokenization

• Component 4: Immutable audit logs that replace implicit trust

Component 1: The Vault Boundary with Mutual TLS

The first structural shift is introducing a data privacy vault – a dedicated service that sits in front of all sensitive data. Nothing reads PII directly from the database. Everything goes through the vault.

The vault’s outer boundary is enforced with mutual TLS (mTLS). Unlike standard TLS where only the server presents a certificate, mTLS requires both parties to authenticate. Every service must have been provisioned with a short-lived certificate issued by a trusted Certificate Authority – and the vault validates it cryptographically before honoring any request.

A certificate valid for four hours means a compromised credential has a strictly bounded damage window. Compare this to traditional service account credentials, valid for years and rotated manually – which is to say, rarely.
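To make the boundary concrete, here is a minimal sketch of the vault side of an mTLS handshake using Python's standard `ssl` module. The function names, the optional file paths, and the four-hour constant are illustrative assumptions, not Securelytix's implementation. It shows two things: a server context that rejects any client without a certificate, and a check that a presented certificate really is short-lived.

```python
import ssl
from typing import Optional

# Assumption: the four-hour lifetime mentioned in the text, in seconds.
MAX_CERT_LIFETIME_SECONDS = 4 * 60 * 60


def build_vault_server_context(
    server_cert: Optional[str] = None,
    server_key: Optional[str] = None,
    client_ca_bundle: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a server-side TLS context that requires a client certificate."""
    # Purpose.CLIENT_AUTH yields a context suitable for the server side.
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # This line turns plain TLS into mutual TLS: no client cert, no handshake.
    ctx.verify_mode = ssl.CERT_REQUIRED
    # File paths are hypothetical and optional so the sketch runs without them.
    if server_cert and server_key:
        ctx.load_cert_chain(certfile=server_cert, keyfile=server_key)
    if client_ca_bundle:
        ctx.load_verify_locations(cafile=client_ca_bundle)
    return ctx


def cert_lifetime_ok(peer_cert: dict, max_seconds: int = MAX_CERT_LIFETIME_SECONDS) -> bool:
    """Reject certificates whose validity window exceeds the allowed lifetime.

    `peer_cert` has the shape returned by SSLSocket.getpeercert().
    """
    not_before = ssl.cert_time_to_seconds(peer_cert["notBefore"])
    not_after = ssl.cert_time_to_seconds(peer_cert["notAfter"])
    return 0 < not_after - not_before <= max_seconds
```

The lifetime check is a design choice rather than something TLS requires: it lets the vault refuse a long-lived certificate even if the Certificate Authority was misconfigured to issue one.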

IMPLEMENTATION NOTE

Securelytix enforces mTLS at the network layer combined with service-identity allowlisting at the application layer. A valid cert from svc-analytics grants connection to the vault – but the policy engine still determines which data fields and operations that service is permitted, based on its declared purpose.
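The second layer, where a valid certificate is necessary but not sufficient, can be sketched as a default-deny allowlist. This is an illustrative toy, not the Securelytix policy engine: the `Rule` type, the rule set, and the purpose names `aggregate_analysis` and `individual_profiling` are assumptions made for the example.

```python
from typing import FrozenSet, NamedTuple


class Rule(NamedTuple):
    """One explicitly permitted combination; anything not listed is denied."""
    service: str
    operation: str
    field: str
    purpose: str


# Hypothetical allowlist. The service names come from the text above;
# the purposes for svc-analytics are invented for illustration.
ALLOWED: FrozenSet[Rule] = frozenset({
    Rule("svc-shipping", "read", "customer_address", "shipping_label_generation"),
    Rule("svc-analytics", "read", "customer_address", "aggregate_analysis"),
})


def is_permitted(service: str, operation: str, field: str, purpose: str,
                 rules: FrozenSet[Rule] = ALLOWED) -> bool:
    """Default deny: allow only exact matches on all four dimensions."""
    return Rule(service, operation, field, purpose) in rules
```

With this rule set, `svc-analytics` can read `customer_address` for `aggregate_analysis` but not for `individual_profiling`, even though the certificate and the field are the same in both requests.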

Component 2: Short-Lived Scoped Access Tokens

Once a service authenticates via mTLS, the vault issues a scoped capability token – an access grant that encodes precisely what the bearer is allowed to do, and for how long.

A token might specify:

• Who is requesting: svc-shipping

• What they can access: customer_address

• Why they need it (the purpose field): shipping_label_generation

• Which specific records: cust_44812

• Until when: Expires in 15 minutes

    jti: "tok_8f3a91c2d4e5"
    sub: "svc-shipping"
    exp: +15 minutes
    scope.operation: "read"
    scope.resource: "customer_address"
    scope.purpose: "shipping_label_generation"
    scope.record_ids: ["cust_44812"]

The purpose field matters enormously. svc-analytics might be permitted to read customer addresses for aggregate analysis but blocked for individual profiling – even if the underlying data field is identical. Purpose-based access control is the difference between “access to a table” and “access for a reason.”

The 15-minute TTL means a stolen token has a hard expiry. No emergency credential rotation, no incident response at 3am. The token is simply already dead.
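A rough sketch of how such a token could be issued and checked, using only the Python standard library. The HMAC-signed format, the hard-coded demo key, and the function names are assumptions for illustration; a production vault would more likely use a standard signed-token format and keep its key in an HSM or KMS.

```python
import base64
import hashlib
import hmac
import json
import secrets
import time

# Assumption: a demo key. A real signing key would never live in source code.
SIGNING_KEY = b"demo-key-do-not-use"


def issue_token(sub, resource, purpose, record_ids, ttl_seconds=900, now=None):
    """Return '<base64 claims>.<hex signature>' with a hard expiry (15 minutes by default)."""
    now = time.time() if now is None else now
    claims = {
        "jti": "tok_" + secrets.token_hex(6),
        "sub": sub,
        "exp": int(now) + ttl_seconds,
        "scope": {
            "operation": "read",
            "resource": resource,
            "purpose": purpose,
            "record_ids": list(record_ids),
        },
    }
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return payload.decode() + "." + signature


def authorize(token, resource, purpose, record_id, now=None):
    """Allow only if the signature is valid, the token is unexpired, and the scope matches."""
    now = time.time() if now is None else now
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return False
    expected = hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(signature.encode(), expected.encode()):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload))
    scope = claims["scope"]
    return (
        now < claims["exp"]
        and scope["resource"] == resource
        and scope["purpose"] == purpose
        and record_id in scope["record_ids"]
    )
```

Expiry is checked on every call, so there is nothing to revoke once the fifteen minutes pass: the same token simply stops authorizing.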

ENGINEERING TRADEOFF

Per-record token scoping creates overhead at scale. For high-throughput services, scope tokens by batch job or request context with tight TTLs. Securelytix supports batch token issuance natively – one vault call covers a bounded set of records for a declared job context.
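One way to picture batch scoping: a single grant names a bounded set of records for one job context and still expires quickly. The `BatchGrant` type, the 500-record cap, and the five-minute TTL below are hypothetical choices for the sketch and do not describe the Securelytix batch API.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable

# Assumption: an upper bound that keeps "batch" from becoming "whole table".
MAX_BATCH_RECORDS = 500


@dataclass(frozen=True)
class BatchGrant:
    job_context: str
    purpose: str
    record_ids: FrozenSet[str]
    expires_at: float

    def covers(self, record_id: str, now: float) -> bool:
        """True only for a named record, and only before expiry."""
        return now < self.expires_at and record_id in self.record_ids


def issue_batch_grant(job_context: str, purpose: str, record_ids: Iterable[str],
                      now: float, ttl_seconds: int = 300) -> BatchGrant:
    """Issue one grant for an explicit, size-limited set of records."""
    ids = frozenset(record_ids)
    if not ids:
        raise ValueError("a batch grant must name at least one record")
    if len(ids) > MAX_BATCH_RECORDS:
        raise ValueError("batch too large; split the job into smaller grants")
    return BatchGrant(job_context, purpose, ids, now + ttl_seconds)
```

The grant still enumerates its records explicitly, so the batch case trades one vault call per record for one call per job without widening what the bearer can read.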

Component 3: Just-in-Time Detokenization

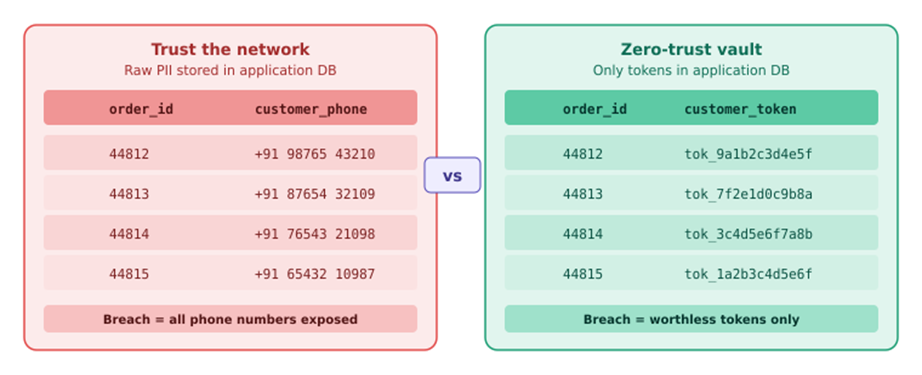

Instead of storing raw PII – Aadhaar numbers, phone numbers, bank account details – in application databases, you store opaque tokens. They are meaningless references that resolve to real values only inside the vault, only for authorized callers, only for declared purposes.

Fig: What lives in your application database: raw PII (left) vs opaque tokens (right). Breaching the tokenized DB yields nothing.

Detokenization happens just in time. The shipping service needs a customer’s address – it calls the vault, presents its scoped token, and receives back a single address value for that customer, for that purpose, for the next fifteen minutes. The raw value is used and discarded. It never sits in the service’s database, cache, logs, or S3 exports.
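The flow above can be sketched with a toy in-memory vault. `ToyVault`, its method names, and the `tok_` prefix on stored tokens are assumptions for illustration; a real vault would sit behind the mTLS boundary and validate a scoped capability token rather than a bare service name.

```python
import secrets
from typing import Dict, Set, Tuple


class ToyVault:
    """In-memory stand-in for a privacy vault (illustration only)."""

    def __init__(self) -> None:
        self._values: Dict[str, str] = {}
        self._grants: Set[Tuple[str, str]] = set()  # (service, purpose) pairs

    def tokenize(self, raw_value: str) -> str:
        # The token is random, not derived from the value, so it reveals nothing.
        token = "tok_" + secrets.token_hex(8)
        self._values[token] = raw_value
        return token

    def allow(self, service: str, purpose: str) -> None:
        self._grants.add((service, purpose))

    def detokenize(self, token: str, service: str, purpose: str) -> str:
        if (service, purpose) not in self._grants:
            raise PermissionError(f"{service} may not detokenize for {purpose}")
        return self._values[token]


def build_shipping_label(vault: ToyVault, address_token: str) -> str:
    """Resolve the address at the moment of use and keep it only in a local variable."""
    address = vault.detokenize(address_token, "svc-shipping", "shipping_label_generation")
    # The raw address is not written to a database, cache, or log; it goes out
    # of scope when this function returns.
    return "SHIP TO: " + address
```

The application database would store only the value returned by `tokenize`; the raw address exists in the service's memory only for the duration of `build_shipping_label`.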

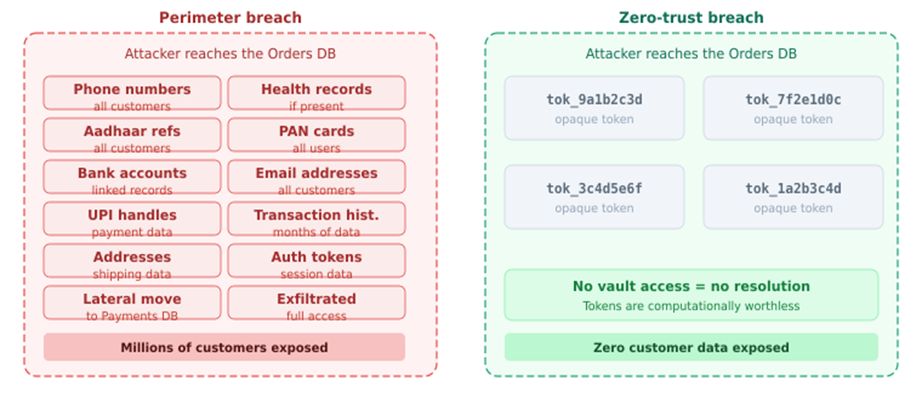

The Blast Radius Difference

Tokenization doesn’t prevent breaches. It contains their damage. An attacker who exfiltrates your orders database gets a million customer tokens – computationally worthless without vault access.

Fig: What an attacker gets: perimeter breach exposes real PII across categories; zero-trust breach exposes only worthless tokens.

THE BLAST RADIUS INSIGHT

A breach of a tokenized application database is categorically different from a breach of a raw-PII database. The attacker’s haul changes from millions of real customer records to millions of meaningless strings. The data itself never left the vault.

Component 4: Audit Logs That Replace Implicit Trust

In a trust-the-network model, trust is implicit. You don’t know which service read which customer record, when, or why. In a zero-trust model, the audit log is not optional instrumentation – it is the mechanism by which trust is verified.

Every vault operation emits a structured, tamper-evident event. This serves three functions:

1. Anomaly detection – if svc-analytics resolves ten thousand phone numbers at 2am on a Saturday, that is a detectable signal, not a silent leak.

2. Compliance demonstration – you have a complete, verifiable record of who accessed what and why, for any data audit.

3. Forensic reconstruction – when an incident occurs, you can trace the exact sequence of data accesses and what was exposed.

    {
      "event": "detokenize",
      "caller_service": "svc-shipping",
      "caller_cert_fingerprint": "sha256:a3f8c9…",
      "field_accessed": "shipping_address",
      "declared_purpose": "shipping_label_generation",
      "outcome": "allowed",
      "vault_latency_ms": 3
    }
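Events of this shape can feed a very simple volume check, which is the kind of signal the anomaly-detection point refers to. The sketch below assumes each event also carries a numeric `ts` timestamp, which the sample event does not show, and the limits are invented for illustration; real detection would use per-service baselines rather than fixed limits.

```python
from collections import Counter
from typing import Dict, Iterable, List


def flag_heavy_callers(events: Iterable[dict], window_start: float, window_end: float,
                       max_per_window: Dict[str, int], default_limit: int = 100) -> List[str]:
    """Return services whose detokenize count in the window exceeds their limit."""
    counts = Counter(
        e["caller_service"]
        for e in events
        # "ts" is an assumed field: seconds since the epoch for the event.
        if e["event"] == "detokenize" and window_start <= e["ts"] < window_end
    )
    return sorted(
        service
        for service, n in counts.items()
        if n > max_per_window.get(service, default_limit)
    )
```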

For logs to be trustworthy, they must be stored separately from the infrastructure they audit – append-only, with cryptographic chaining, in an isolated pipeline your application services cannot modify.
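Cryptographic chaining is easiest to see in a few lines of code. In this minimal sketch, each entry stores the SHA-256 hash of the previous entry's hash plus its own event, so editing any earlier event breaks every later link. The entry layout and function names are assumptions; the sketch also covers only tamper evidence, not the isolated storage the paragraph above calls for.

```python
import hashlib
import json
from typing import List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON so the same event always hashes to the same value.
    body = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode()).hexdigest()


def append_event(log: List[dict], event: dict) -> None:
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({
        "event": dict(event),  # copy so later caller mutations do not alter the log
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, event),
    })


def verify_chain(log: List[dict]) -> bool:
    """Recompute every link; any edited, removed, or reordered entry fails."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this detects modification but cannot prevent truncation from the end, which is one reason the latest hash is usually anchored somewhere the audited services cannot write to.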

Trust the Network vs. Trust Nothing

| Dimension | Trust the network | Zero-trust layer |
| --- | --- | --- |
| Default posture | Implicit – network membership grants access | Every access must be explicitly authorized |
| Credential lifetime | Long-lived, manually rotated | Minutes to hours, automatic |
| Breach blast radius | Entire database exposed | Attacker gets worthless tokens |
| Data at rest | Raw PII in DBs, logs, S3 exports | Only tokens in application layer |
| Audit trail | Reconstructed from logs if lucky | Every operation logged with full context |
| Insider threat | Engineer reads any customer record | Engineers cannot read raw PII directly |

The Engineering Tradeoffs

What you gain

• Bounded breach blast radius – a compromised service cannot escalate to data it doesn’t need

• Complete, tamper-evident audit trail for every sensitive data access

• Automatic short-lived credential rotation – no 3am incident response for stolen credentials

• Insider threat containment – your engineers cannot read data they shouldn’t

• Demonstrable data minimization for any audit

What you take on

• Vault infrastructure requiring high availability and disaster recovery planning

• 1–5ms additional latency per vault call, mitigated by caching and batching

• Developer workflow changes – engineers must reason about token scopes explicitly

• Migration from existing systems is a multi-quarter engineering project

ON MIGRATION

The pragmatic path is phased: introduce the vault alongside existing direct DB access (dual-read mode), tokenizing new writes while maintaining read compatibility. Progressively migrate read paths service by service. For a mid-sized microservices deployment, expect six to twelve months.
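Dual-read mode can be sketched as a thin read/write shim in front of a column that holds a mix of legacy raw values and new tokens. The `tok_` prefix convention and the function names are assumptions for this example; the `tokenize` and `detokenize` callables stand in for real vault calls.

```python
from typing import Callable

# Assumption: vault tokens are recognisable by a fixed prefix. If legacy raw
# values could ever start with this prefix, a separate marker column is safer.
TOKEN_PREFIX = "tok_"


def write_field(raw_value: str, tokenize: Callable[[str], str]) -> str:
    """New writes always go through the vault; only the token is stored."""
    return tokenize(raw_value)


def read_field(stored_value: str, detokenize: Callable[[str], str]) -> str:
    """Dual-read: resolve tokens via the vault, pass legacy raw values through."""
    if stored_value.startswith(TOKEN_PREFIX):
        return detokenize(stored_value)
    return stored_value  # legacy row not yet migrated


def is_migrated(stored_value: str) -> bool:
    """Helper for tracking backfill progress, one row at a time."""
    return stored_value.startswith(TOKEN_PREFIX)
```

Because `read_field` handles both shapes, read paths can be moved behind the vault one service at a time while a background job backfills the remaining raw rows.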

Frequently Asked Questions

Q: What is a zero-trust data access layer?

An architectural pattern where no internal service gets automatic access to sensitive data. Every access is explicitly requested, cryptographically authenticated, scoped to a purpose and time window, and logged. Access is never assumed – it is earned per-operation.

Q: How is this different from database encryption?

Encryption protects data at rest, but the moment a query runs, data decrypts in memory and is available to anyone with credentials. A zero-trust layer goes further: raw PII never exists in the application database at all, access is scoped per-purpose, and every read is audited.

Q: Does this add too much latency?

The vault adds 1–5ms per call. For most enterprise workflows, this is imperceptible. High-throughput scenarios are handled with caching, batching, and async resolution patterns.

Q: Why does this matter specifically for Indian enterprises?

Indian enterprises handle some of the world’s most sensitive citizen-linked identifiers: Aadhaar numbers, PAN cards, UPI handles, health records. A zero-trust data access architecture ensures a successful breach yields only meaningless tokens – not the raw PII of millions of customers. Securelytix is India’s first indigenous privacy vault, built for this data landscape and deployable on-premise for data sovereignty.

Conclusion: What “No Ambient Access” Buys You

When a service is compromised in this architecture, the attacker gets a short-lived certificate revocable in seconds, scoped access to whatever the service was currently authorized to read, and nothing else. No lateral movement. No direct database queries. No long-lived credentials to use later.

The audit log tells you exactly what was accessed and when. The token store means whatever was exfiltrated from the application database is computationally worthless.

This changes the character of a breach from “attacker has access to everything we have on our customers” to “attacker had a 15-minute window to read shipping addresses for three specific orders.” The first sentence ends companies. The second is survivable.

For enterprises managing Aadhaar-linked identifiers, UPI handles, and financial data at scale, that difference is not an engineering preference. It is an obligation to the customers who trusted you with their data.

How Securelytix Approaches This

Building a zero-trust data access layer from scratch is a substantial engineering undertaking: vault infrastructure, certificate lifecycle management, token schema design, an audit pipeline, and the slow work of migrating existing services. Most enterprise teams find the architecture clear in principle but difficult to operationalise without a dedicated platform underneath it.

Securelytix is India’s first indigenous privacy vault, built specifically around this architecture. A few things worth knowing about how it works in practice:

| Capability | What it does |
| --- | --- |
| Field-level tokenization | PII fields are tokenized at the point of ingestion – individual columns, not entire tables. You can tokenize Aadhaar numbers in one table without touching the rest of the schema. |
| Policy-based access control | Access policies are defined by service identity, operation type, and declared purpose. The same data field can be accessible for one business reason and blocked for another, without code changes. |
| On-premise and cloud deployments | For enterprises with data residency requirements, the vault can run entirely within your own infrastructure. No customer data transits Securelytix systems. |
| Incremental migration path | Dual-read mode lets you tokenize new writes while existing read paths continue working. You don’t need a big-bang migration to get started; most teams begin with their highest-sensitivity fields. |
| Audit log out of the box | Every detokenization event is logged with caller identity, declared purpose, and outcome. The log is structured, tamper-evident, and exportable for compliance workflows. |

The architecture described in this post is exactly what Securelytix implements, not as a managed service that handles your data, but as infrastructure that runs inside your environment and puts your engineering team in control of access policy.

If you’re evaluating how to approach zero-trust data access for your organisation, the architecture and the docs are a reasonable starting point: securelytix.tech/architecture.